AI for grant funding NGOs: Three ways Artificial Intelligence can help measure impact

Created using DALL·E 3

Organisations that fund programs, or that perform or fund research, are naturally interested in the impact their funding is having, but tracking and measuring that impact can be extremely challenging.

This is partly because impact can mean different things for different grants and organisations, and partly because the full impact of funding may not become apparent until long after the funding is distributed.

At Mantis, we specialise in Natural Language Processing (NLP), the field of AI concerned with processing human language and documents. So when we work with NGOs to help measure their impact, we tend to work from written sources such as policy documents, mainstream media, academic publications, or some other type of document entirely.

In this blog post we’ll look at three ways that Artificial Intelligence (AI) can be used to measure the impact of funding, based on lessons we’ve learned working with some of the world’s biggest grant funders, such as The Wellcome Trust.

1. Finding impactful needles in haystacks

The first step in looking for evidence of impact in written sources is to collect candidate documents that might point to the impact a grant has had. There are a number of ways we might go about this, but we usually end up with a collection of anywhere from many thousands to millions of candidate documents, of which only a fraction are usually relevant to us.

The naive approach to finding the relevant documents is to use keyword searches, but this usually results in a lot of false positives: documents which contain relevant keywords, but are not actually relevant.

Instead, we can use AI as a sophisticated filter to find documents that really are relevant, including those that don’t contain any relevant keywords. We can do this by using a technique called semantic similarity. This is where we compare the meaning of documents to see if the overall meaning is similar to what we are looking for.
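As a rough illustration of semantic similarity, the sketch below ranks documents by the cosine similarity between their vectors and the vector of a query. The `TOY_VECTORS` table and the `embed` function are invented for this example: in practice the vectors would come from a trained embedding model, and this is not a description of any particular production system. Note that the top-ranked document shares no words with the query.

```python
import math

# Toy 3-dimensional "embeddings" (axes: health, funding, sport). The values
# are invented for illustration; a real system would obtain vectors from a
# trained sentence-embedding model.
TOY_VECTORS = {
    "vaccine": (0.9, 0.1, 0.0),
    "immunisation": (0.9, 0.1, 0.0),
    "malaria": (0.8, 0.0, 0.0),
    "grant": (0.1, 0.9, 0.0),
    "funded": (0.1, 0.9, 0.0),
    "football": (0.0, 0.0, 1.0),
    "league": (0.0, 0.1, 0.9),
}


def embed(text):
    """Average the toy vectors of the known words in `text`."""
    vectors = [TOY_VECTORS[w] for w in text.lower().split() if w in TOY_VECTORS]
    if not vectors:
        return (0.0, 0.0, 0.0)
    return tuple(sum(axis) / len(vectors) for axis in zip(*vectors))


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def rank_by_similarity(query, documents):
    """Return (score, document) pairs, most similar to the query first."""
    query_vector = embed(query)
    scored = [(cosine_similarity(query_vector, embed(d)), d) for d in documents]
    return sorted(scored, reverse=True)


documents = [
    "immunisation programme funded in rural clinics",
    "football league results",
]
ranking = rank_by_similarity("malaria vaccine grant", documents)
for score, document in ranking:
    print(f"{score:.2f}  {document}")
```

A threshold on the score, chosen by checking a sample of results by hand, would then decide which documents move on to the next stage.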

Another approach is to use an AI model to categorise the documents by applying “tags”. This can be a simple yes/no tag indicating whether the document is relevant, or it could be a comprehensive system of tags such as Medical Subject Headings (MeSH). In either case the aim is simply to identify relevant documents based on the tags the AI applies, so that we can progress to the next stage with a smaller subset of all the documents that we have collected.
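The filtering step that follows tagging can be sketched as below. This assumes a tagging model has already produced a confidence score per tag for each document; the document ids, tag names, scores, and the `filter_by_tags` helper are all hypothetical and exist only to show the shape of the step.

```python
def filter_by_tags(tagged_documents, wanted_tags, threshold=0.5):
    """Keep documents where any wanted tag scores at or above `threshold`."""
    kept = []
    for doc_id, tag_scores in tagged_documents.items():
        confident_tags = {tag for tag, score in tag_scores.items() if score >= threshold}
        if confident_tags & wanted_tags:
            kept.append(doc_id)
    return kept


# Hypothetical model output: document id -> {tag: confidence}.
tagged_documents = {
    "doc-1": {"Malaria": 0.92, "Vaccines": 0.81},
    "doc-2": {"Sports": 0.88},
    "doc-3": {"Malaria": 0.30, "Economics": 0.75},
}

relevant = filter_by_tags(tagged_documents, wanted_tags={"Malaria", "Vaccines"})
print(relevant)
```

Lowering `threshold` keeps more borderline documents (here `doc-3`) at the cost of more false positives, so the value is a trade-off rather than a fixed rule.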

2. This isn’t the Jane Smith you’re looking for

Once we have collected and filtered the documents that are relevant to our subject of interest, we can look at the documents in more detail. The most straightforward evidence of impact is when the work that is funded is mentioned or cited.

A mention could be something in a mainstream media article such as:

“Researchers at the University of Cambridge discovered a groundbreaking new vaccine for Malaria…Dr. Jane Smith explained the vaccine was developed with mRNA technology…the research was funded by the Fictional Institute”.

As before, we can get some of the way just by searching for keywords, but we will run into the same false positives we highlighted already. Keyword search also assumes that we know in advance everything we want to search for, which may not be the case.

Instead, we can use AI both to identify the sorts of things we want to find (techniques, collaborators, institutions, locations, etc.) and to ensure that the mention refers to our “Jane Smith”, not another person who just happens to have the same name. The Wellcome Trust have written a blog post about this.
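One simple way to picture the disambiguation step is to compare the text around a mention with what we already know about the funded researcher. The sketch below is a deliberately crude stand-in for a trained model: the `context_overlap` helper, the profile terms, and the 0.5 cut-off are assumptions made for illustration only.

```python
def context_overlap(mention_context, profile_terms):
    """Fraction of profile terms that appear in the text around a mention."""
    cleaned = mention_context.lower().replace(",", " ").replace(".", " ")
    words = set(cleaned.split())
    hits = [term for term in profile_terms if term.lower() in words]
    return len(hits) / len(profile_terms) if profile_terms else 0.0


# Hypothetical profile of the "Jane Smith" whose work was funded.
our_jane_smith = ["cambridge", "malaria", "mrna", "vaccine"]

mentions = [
    "Dr. Jane Smith of the University of Cambridge explained the malaria "
    "vaccine was developed with mRNA technology.",
    "Jane Smith, a local councillor, opened the new leisure centre.",
]

scores = [context_overlap(text, our_jane_smith) for text in mentions]
# Treat a mention as "ours" only when enough of the profile is present.
is_our_researcher = [score >= 0.5 for score in scores]
print(list(zip(scores, is_our_researcher)))
```

A real system would typically learn this comparison from labelled examples rather than rely on exact word matches, but the inputs (a mention, its context, and a profile of the person) stay the same.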

3. Not all citations are equal

Like mentions, academic citations are an important way of measuring the reach of research that was funded by a grant. In academic journals, citations and their accompanying references are generally well structured, making analysis simple. In fact, academics already generate all sorts of metrics to measure the impact of academic publications. One example is the journal Impact Factor, a measure of how impactful an academic journal is based on how often it is cited.

Not everyone is as diligent when it comes to references though, and in some documents, particularly policy documents created by national governments, the citations can be poorly structured, making them hard to process automatically.

AI can help us here to detect citations and references, even if they are badly formatted, and extract the individual components such as author names, title, publication, etc., making the process of linking the reference to the original research article trivial. We previously worked with The Wellcome Trust on a model to do exactly this, which is available openly.
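Once a title has been extracted from a messy reference, linking it to a known publication can be sketched with fuzzy string matching from Python's standard library. This is a minimal illustration, not the openly available model mentioned above: the `link_reference` helper, the publication ids and titles, and the 0.85 cut-off are all hypothetical.

```python
from difflib import SequenceMatcher


def normalise(title):
    """Lower-case a title and replace punctuation with single spaces."""
    cleaned = "".join(c.lower() if c.isalnum() else " " for c in title)
    return " ".join(cleaned.split())


def link_reference(extracted_title, known_publications, min_ratio=0.85):
    """Return the id of the best-matching known title, or None if none is close."""
    best_id, best_ratio = None, 0.0
    for pub_id, title in known_publications.items():
        ratio = SequenceMatcher(None, normalise(extracted_title), normalise(title)).ratio()
        if ratio > best_ratio:
            best_id, best_ratio = pub_id, ratio
    return best_id if best_ratio >= min_ratio else None


# Hypothetical list of publications that came out of funded grants.
known_publications = {
    "pub-001": "An mRNA vaccine candidate for malaria",
    "pub-002": "Funding models for global health research",
}

match = link_reference("AN MRNA VACCINE CANDIDATE FOR MALARIA.", known_publications)
no_match = link_reference("Annual report of the transport committee", known_publications)
print(match, no_match)
```

Matching on the title alone is the simplest case; with author names and publication year also extracted, those fields could be compared too to reduce false links.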

The other way that AI can help here is to confirm that a mention or citation is indicative of real impact. Consider for example:

Dr. Jane Smith was forced to retract publications relating to her research on mRNA vaccines…

Or

The project funded by the Fictional Institute was considered to have no impact on the prevalence of Malaria in The Fictional Republic of Fictionia.

Neither of these examples is indicative of the positive impact that we are usually asked to measure, but AI can help us to detect and discard them, or indeed report them.

To conclude

To round this up, we’ve gone through some of the ways that AI can be helpful when trying to measure the impact of grant funding. We’ve just scratched the surface here, but these are some of the common tasks that we find ourselves performing for NGOs again and again.

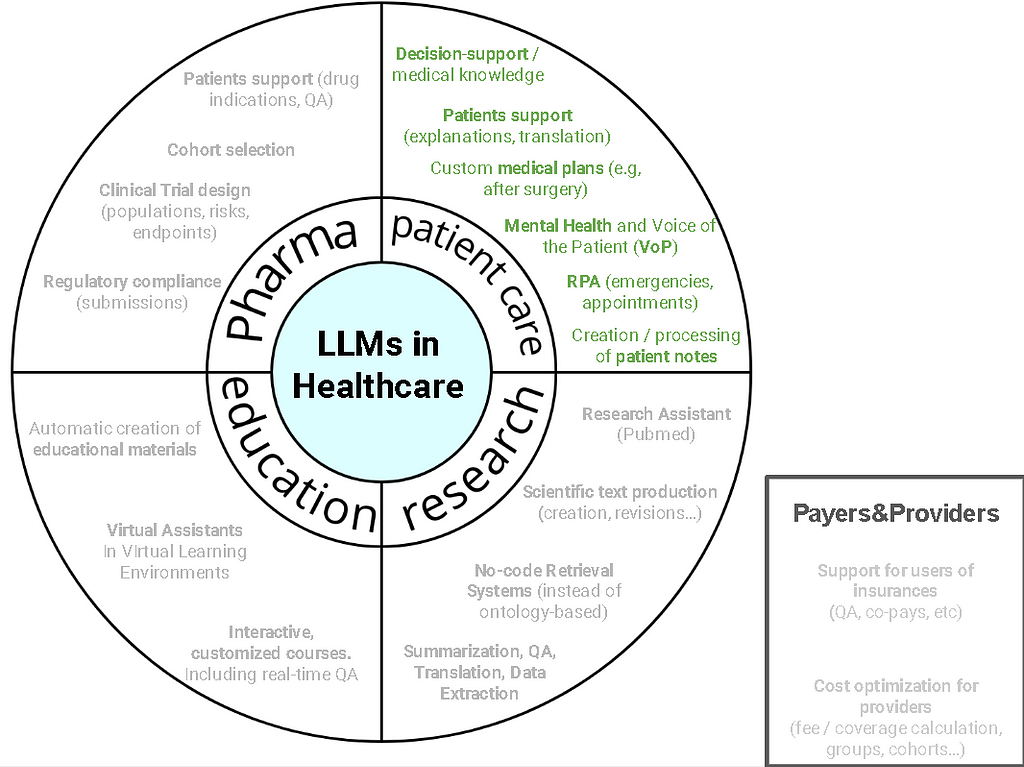

These are all examples of “extractive” AIs which extract information from data. In a future blog post we’ll look at the ways the latest generative AI can be used by NGOs to measure impact.

In the meantime, if you have any questions or comments about this post, or want to discuss other ways that AI can help to track impact, please feel free to get in touch with us at hi@mantisnlp.com.

AI for grant funding NGOs: Three ways Artificial Intelligence can help measure impact was originally published in MantisNLP on Medium.